Implications for Videoconferencing over Satellite

Oct 23, 2020

Now more than ever, videoconferencing has become an important part of our business and personal lives. Even before the pandemic arrived in early 2020, more businesses were moving towards meeting only virtually. With international travel limited, companies are relying more and more on video to communicate with both staff and clients.

As the quality of video has improved, so too has the bandwidth required for an acceptable video stream. Bandwidth for applications such as YouTube can range from 200 kbps to many Mbps depending on the resolution. Most videoconferencing applications today require about 300 kbps for SD (standard definition) quality. Given that most broadband is shared, 512 kbps in both directions is the minimum for a generally consistent videoconference session, depending on how much other traffic is active. If guaranteed video quality is required, then a CIR (committed information rate) to support each session and QoS (quality of service) prioritization will be necessary. With multiple sessions, the bandwidth needs can add up quickly.
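As a rough illustration of how quickly concurrent sessions add up, the sketch below multiplies the 512 kbps per-direction figure quoted above by the number of sessions. The function name and the optional `headroom` parameter are assumptions made for illustration, not part of any vendor's sizing tool.

```python
# Per-direction bandwidth for one consistent SD session, from the text above.
KBPS_PER_SESSION = 512


def required_kbps(sessions: int, headroom: float = 0.0) -> float:
    """Estimate per-direction bandwidth (kbps) for concurrent sessions.

    `headroom` is a hypothetical safety margin (0.25 = 25% extra) for other
    traffic sharing the link; it is an assumption, not a figure from the text.
    """
    if sessions < 0:
        raise ValueError("sessions must be non-negative")
    return sessions * KBPS_PER_SESSION * (1.0 + headroom)


for n in (1, 4, 10):
    print(f"{n} session(s): {required_kbps(n):.0f} kbps each way")
```

This is a simple linear estimate; a real link budget would also need to account for CIR, QoS policy, and protocol overhead.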

Until recently, when a client had one call connecting multiple locations, the only way to avoid a large bandwidth bill was to invest in a videoconferencing bridge. A third party would either create a bridge at the client’s central location or rent out a bridge elsewhere, giving the call a single connection point. No matter how many attendees a meeting had, they would all be routed through the bridge, so you only had to allocate enough bandwidth for a single video stream. This saved customers money, even after the expense of using the third party.
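The saving from a bridge can be sketched as follows. This assumes, purely for illustration, that a site without a bridge needs one full 512 kbps stream per remote location, while a bridged site needs a single stream regardless of attendance. Both function names are hypothetical.

```python
KBPS_PER_STREAM = 512  # per-direction figure quoted earlier in the article


def site_kbps_direct(remote_locations: int) -> int:
    """Without a bridge: assume one full stream per remote location."""
    return remote_locations * KBPS_PER_STREAM


def site_kbps_bridged(remote_locations: int) -> int:
    """With a bridge: a single stream to the bridge, however many attend."""
    return KBPS_PER_STREAM if remote_locations > 0 else 0


for remotes in (1, 3, 8):
    print(
        f"{remotes} remote location(s): "
        f"direct {site_kbps_direct(remotes)} kbps, "
        f"bridged {site_kbps_bridged(remotes)} kbps"
    )
```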

With larger companies like Google and Microsoft now part of the videoconferencing market with Meet and Teams, this bridging service has largely been taken over by these vendors. If a business uses these applications, the bridge is now run by Google or Microsoft from the cloud. Where before hardware had to be installed, or capacity rented from a third-party bridge provider, today it is all becoming cloud-based. Now, if training is being delivered around the world, each location need only worry about its single connection to the cloud, rather than a connection to every organisation on the call. While this isn’t great for sellers of videoconferencing bridges, it’s fantastic news for the consumer.

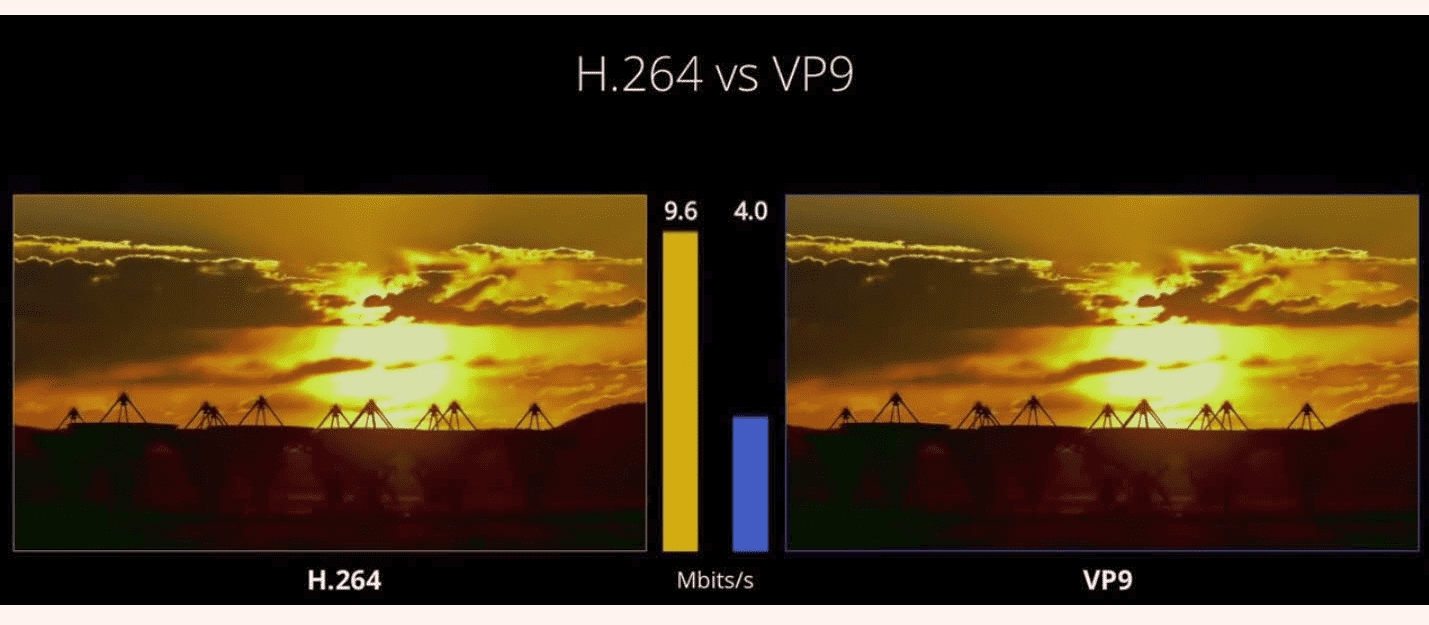

On top of this, Google Meet has also moved to the VP9 codec for streaming video. This codec is 20% more efficient than the typical H.264 video codec. For the consumer, this means that while both codecs can produce the same image quality, VP9 uses considerably less bandwidth to do so. A fantastic example is seen in this video:

[Embedded video]

This means that a videoconferencing solution based on the VP9 codec will need 20% less bandwidth in your link budget for the same video quality.
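As a minimal sketch of that arithmetic, the function below applies the 20% figure from the text to a given H.264 bitrate. The function name is hypothetical, and real savings will vary with content and encoder settings.

```python
VP9_SAVING = 0.20  # efficiency gain over H.264 quoted in the text


def vp9_kbps(h264_kbps: float, saving: float = VP9_SAVING) -> float:
    """Bandwidth VP9 would need for the same quality as an H.264 stream."""
    if not 0.0 <= saving < 1.0:
        raise ValueError("saving must be in the range [0, 1)")
    return h264_kbps * (1.0 - saving)


# Example: the 512 kbps per-direction figure used earlier in the article.
print(f"{vp9_kbps(512):.1f} kbps")
```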

This does not help with multiple concurrent calls from one location, but Nvidia has recently showcased research that will dramatically cut bandwidth requirements for video calls. (petapixel.com/2020/10/06/nvidia-uses-ai-to-slash-bandwidth-on-video-calls/)

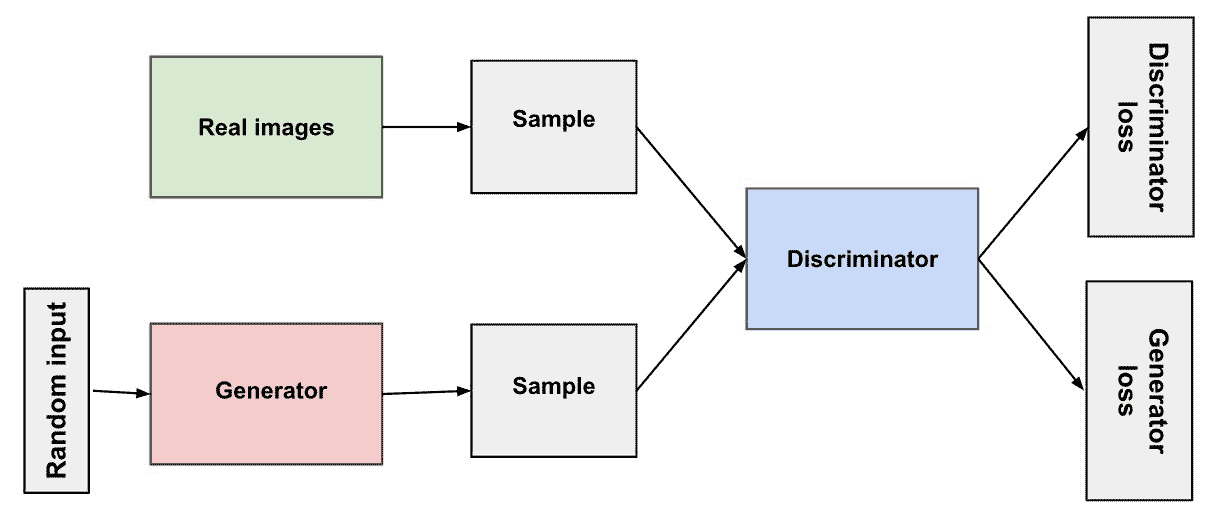

The codec uses AI, replacing the common H.264 video codec with a neural network. Usually a video call sends encoded frames of the video image along the link; here, the sender instead transmits a reference picture of the caller and then specific reference points around the eyes, nose, and mouth. The neural network (specifically a generative adversarial network, or GAN) uses the reference picture and key points to generate the received image. This uses considerably less data, because the image is generated largely from information that has already been sent. Better quality videoconferencing therefore becomes far more accessible, even without high-bandwidth connections. The GAN has examples of how a participant’s facial features move when speaking, and it artificially generates what it perceives as an appropriate image. Essentially, when this comes into full use, on some connections your viewer won’t actually see your real facial movements but a computer-generated facsimile.
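To give a feel for why sending key points is so much lighter than sending encoded frames, here is a back-of-the-envelope sketch. The function is hypothetical and every input value (number of key points, bytes per coordinate, frame rate) is a placeholder chosen only to show the arithmetic. None of these values are figures from Nvidia's research.

```python
def keypoint_stream_kbps(num_keypoints: int, bytes_per_coord: int, fps: int) -> float:
    """Raw bitrate of sending only (x, y) key points per frame, in kbps.

    Ignores the one-off reference picture and any protocol overhead.
    """
    bytes_per_frame = num_keypoints * 2 * bytes_per_coord  # x and y per point
    return bytes_per_frame * 8 * fps / 1000


# Placeholder inputs (assumptions, not measured values).
rate = keypoint_stream_kbps(num_keypoints=10, bytes_per_coord=4, fps=30)
print(f"{rate:.1f} kbps")
```

Whatever the real parameters turn out to be, the payload grows with the number of key points rather than with the image resolution, which is where the saving comes from.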

The positive uses do not stop there. If a user is giving a lecture but has to refer to something off camera, the GAN can generate a moving image of them still looking directly at the audience. It also means that companies can have fully reactive computer-generated images giving lectures.

A GAN is trained through what is called unsupervised learning. This is where the AI is given a data set with no labels and minimal human interaction. The GAN looks at the data, recognises patterns and irregularities, and generates new examples that could conceivably match the data. The GAN has two sub-models: a generator, which produces new examples from the data, and a discriminator, which tries to figure out whether examples are real or generated. They continue in an adversarial game until the discriminator is fooled around 50% of the time.
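For readers who want the formal version, the adversarial game is usually written as the minimax objective below. This is the standard formulation from the GAN literature, with generator $G$, discriminator $D$, and noise input $z$. It is not necessarily the exact loss used in the Nvidia research.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

For a fixed generator with output distribution $p_g$, the best possible discriminator is

$$
D^*(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}
$$

When the generated examples become indistinguishable from the real ones ($p_g = p_{\text{data}}$), this gives $D^*(x) = \tfrac{1}{2}$ everywhere, which is the "fooled around 50% of the time" condition: the discriminator can do no better than a coin flip.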

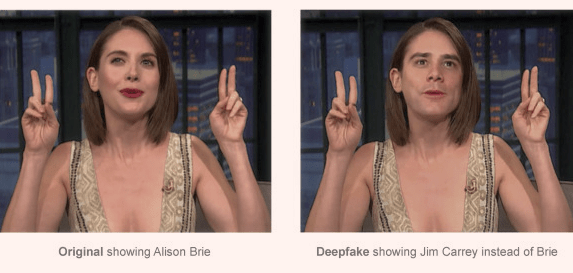

In the modern landscape, concerns over deepfakes (where mapping allows one person’s face to be convincingly transposed onto another’s) mean there are possible negative implications for this software. As with all technological leaps, there are those whose motivations and intentions may not reflect the good nature of the designers. We as users have to be vigilant as this system comes into use.

Overall, for businesses, the innovations in videoconferencing seem to be keeping pace with demand. We are fortunate that, with the world now catapulted into a situation where videoconferencing is becoming the norm and a necessity for day-to-day operations, technology is making implementation more user-friendly and cost-effective.