Nov 22, 2018

Here at BusinessCom Networks, one of the most frequent tasks involved in providing broadband satellite services is estimating how much bandwidth to propose. A few clients know exactly what they need, but many require some assistance estimating how much capacity they should sign up for. Our bandwidth estimates for clients have risen steadily over the last 15 years and will likely continue to do so. We use different techniques depending on the size of the site, the type of bandwidth and the application requirements. Over the years, we have steadily reduced, for example, the number of basic internet users who can share 1 Mbps of bandwidth on our commercial services and be pleased with the performance. Will this trend continue?

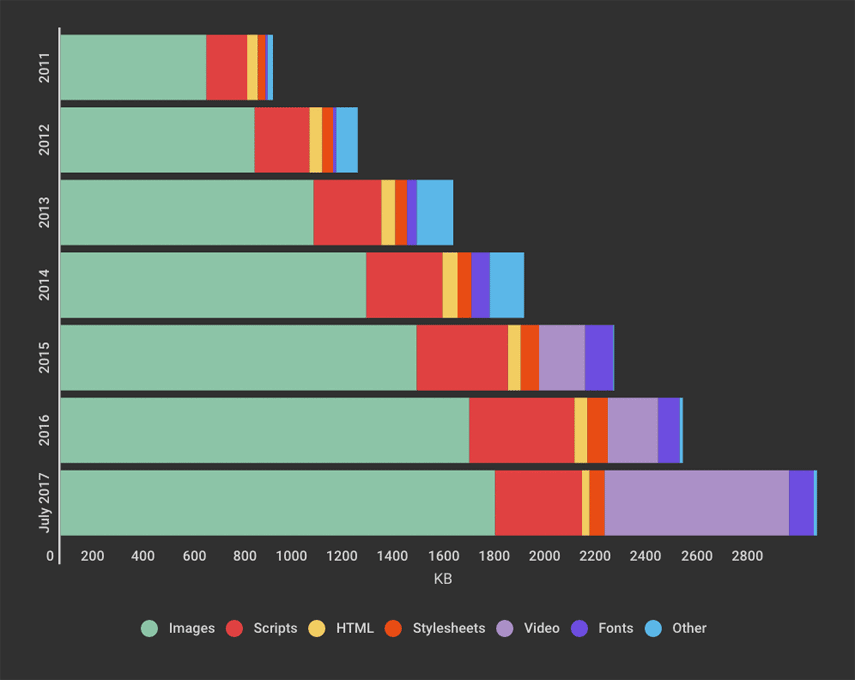

A key metric used to track web page traffic has been the “average page size.” Back in 2012, the average web page hit 1 MB. Today it is closing in on 4 MB. However, “average page size” may or may not be a useful metric to determine performance from the user’s perspective.
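A simple average-load model shows why a heavier average page means fewer users per megabit. This is a back-of-the-envelope sketch, not BusinessCom's estimation method. It assumes each user loads one page every 60 seconds, which is a hypothetical browsing pace. It uses decimal units (1 MB = 8 megabits) and ignores caching, compression, latency and peak-hour bursts. The function name `users_per_mbps` is illustrative.

```python
def users_per_mbps(page_mb: float, seconds_between_pages: float) -> float:
    """Average-load estimate of how many users one Mbps can carry.

    Simplified model (an assumption, not a provisioning rule): each user
    downloads one page of `page_mb` megabytes every `seconds_between_pages`
    seconds, with no caching or compression, and traffic peaks are ignored.
    """
    megabits_per_page = page_mb * 8  # decimal units: 1 MB = 8 megabits
    # Each user averages megabits_per_page / seconds_between_pages Mbps,
    # so one Mbps supports the inverse of that many users.
    return seconds_between_pages / megabits_per_page


if __name__ == "__main__":
    # Hypothetical browsing pace: one new page per minute per user.
    for page_mb in (1.0, 4.0):  # roughly the 2012 and today's average page sizes
        print(f"{page_mb:.0f} MB pages: {users_per_mbps(page_mb, 60):.2f} users per Mbps")
```

Under these assumptions, going from 1 MB to 4 MB pages cuts the number of users that 1 Mbps can carry by a factor of four, from 7.5 to about 1.9. Real sizing must also account for peaks, which is where sharing ratios and bandwidth management come in.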

Average Page Size

The idea behind an average page size is a single web page that represents typical traffic patterns across the entire internet. It takes into account both the total payload size and the mix of content types, such as data, images, video and fonts. It is meant to be a central or typical value of the distribution of web page sizes, sometimes called the center of the distribution. Strictly speaking, the value at which 50% of web pages are larger and 50% are smaller is the median. Page sizes have a long tail of very heavy pages, so the arithmetic mean is typically pulled above the median.

Source: SpeedCurve


On desktop workstations, web page sizes range up to 30 MB and beyond. On mobile, the range is about a third of that, up to 10 MB and beyond. With such a wide spread, there is really no such thing as a “typical” website. The “average” is derived from data sets and may or may not reflect how users perceive performance. The concept of an average page size is most useful for tracking the internet over time. Many pages are getting larger, but some have become smaller. Still, there is no denying that the average page size has grown along with the number of images and embedded videos.
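The gap between “average” and “typical” is easy to demonstrate. The value with half of all pages larger and half smaller is the median. The arithmetic mean, by contrast, is pulled upward by a few very heavy pages. This sketch applies Python's standard `statistics` module to a small set of invented page weights. The numbers are hypothetical, not SpeedCurve data.

```python
import statistics

# Hypothetical page weights in MB (invented for illustration, not measured data).
# Like real page-weight data, the sample is right-skewed: most pages are
# modest, while a few very heavy pages form a long tail.
page_sizes_mb = [0.8, 1.2, 1.5, 1.9, 2.4, 3.0, 3.8, 9.5, 28.0]

mean_mb = statistics.mean(page_sizes_mb)      # pulled upward by the heavy tail
median_mb = statistics.median(page_sizes_mb)  # half of the pages lie on each side

# Count how many pages exceed each statistic.
larger_than_mean = sum(1 for s in page_sizes_mb if s > mean_mb)
larger_than_median = sum(1 for s in page_sizes_mb if s > median_mb)

n = len(page_sizes_mb)
print(f"mean   = {mean_mb:.2f} MB, pages larger: {larger_than_mean} of {n}")
print(f"median = {median_mb:.2f} MB, pages larger: {larger_than_median} of {n}")
```

Here the mean (about 5.8 MB) is larger than seven of the nine pages, while the median (2.4 MB) sits in the middle. A single page-size statistic therefore says little about an individual user's experience unless you know which statistic was reported.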

Web Developers – Are You Aware?

Much of the increase in size comes from content designed to enrich the user experience, but part of it is simply laziness. Developers often assume that users have high-bandwidth internet access, so they put little effort into optimizing delivery. These larger-than-necessary downloads are now being taken more seriously because mobile usage is growing, and mobile users often lack higher-speed internet access.

Mobile use has exploded, passing desktop use and widening the gap. As a result, web developers have had to shift their focus. Desktop users could be assumed to have unlimited high-speed internet, but mobile users often had lower speeds and usage-based billing, which made them more sensitive to growing page sizes. This shift has had some advantages: smaller screens don't need the same high-resolution images, and developers look for other ways to reduce download traffic. Developers have a variety of tools for compressing images and video and for applying other techniques that limit or reduce page sizes. This is good, because the external tools that used to perform this kind of optimization in the network are quickly becoming obsolete.

What We Do

Satellite pricing used to be higher. To deal with growing page sizes and rising performance demands during that period, BusinessCom Networks quietly built a unique set of compression and optimization capabilities into our broadband satellite services. These tools work on unencrypted HTTP traffic. We re-compress images, optimize HTML, JavaScript, CSS and other website code by removing white space and compressing it, and cache web content locally. This significantly reduces traffic over satellite links and therefore improves throughput for the client. However, websites are now moving to HTTPS, which encrypts traffic and so prevents us from easily optimizing, compressing or caching it. At the time of writing, about half of all websites still operate over HTTP, including many large corporate websites, so these capabilities continue to offer an advantage in throughput and performance. We are also directing more attention at advanced DPI (deep packet inspection) tools. These work with our network management capabilities to identify and classify traffic and prioritize it accordingly. We continue working to optimize our users' performance. What are the web developers doing?
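The following toy sketch shows why stripping white space and compressing code saves satellite capacity. It uses only Python's standard library (`re` and `gzip`). It is not BusinessCom's optimization pipeline, which is not described here. The naive minifier is a hypothetical helper, and it would break whitespace-sensitive content such as `<pre>` blocks. The approach also depends on the HTML being visible. On an HTTPS connection, an intermediary sees only encrypted bytes and can apply neither step.

```python
import gzip
import re


def minify_whitespace(html: str) -> str:
    """Toy minifier: drop whitespace between tags and collapse other runs.

    Illustrative only: a real minifier must preserve <pre>, <textarea>,
    inline scripts and spaces that separate inline elements.
    """
    html = re.sub(r">\s+<", "><", html)  # remove indentation/newlines between tags
    html = re.sub(r"\s+", " ", html)     # collapse remaining whitespace runs
    return html.strip()


def compressed_size(data: bytes) -> int:
    """Size in bytes after gzip compression with standard-library defaults."""
    return len(gzip.compress(data))


if __name__ == "__main__":
    # Hypothetical, indented page fragment (not a real site).
    rows = "\n".join(f'        <li class="item">Product {i}</li>' for i in range(200))
    page = f"<html>\n  <body>\n    <ul>\n{rows}\n    </ul>\n  </body>\n</html>\n"

    original = page.encode("utf-8")
    minified = minify_whitespace(page).encode("utf-8")
    print("original:       ", len(original), "bytes")
    print("minified:       ", len(minified), "bytes")
    print("minified + gzip:", compressed_size(minified), "bytes")
```

Repetitive markup like the generated list compresses especially well. Savings on real pages vary with their content.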

What are the Developers Doing?

How much does growth in average page size really matter for performance, or for the perception of performance? Many industry experts think we need a metric that takes “rendering” into account. After all, what really counts is when the user perceives a page as ready. In the past, a page was considered rendered once it was fully downloaded. This metric was available on all browsers and could be measured by third parties or by users.

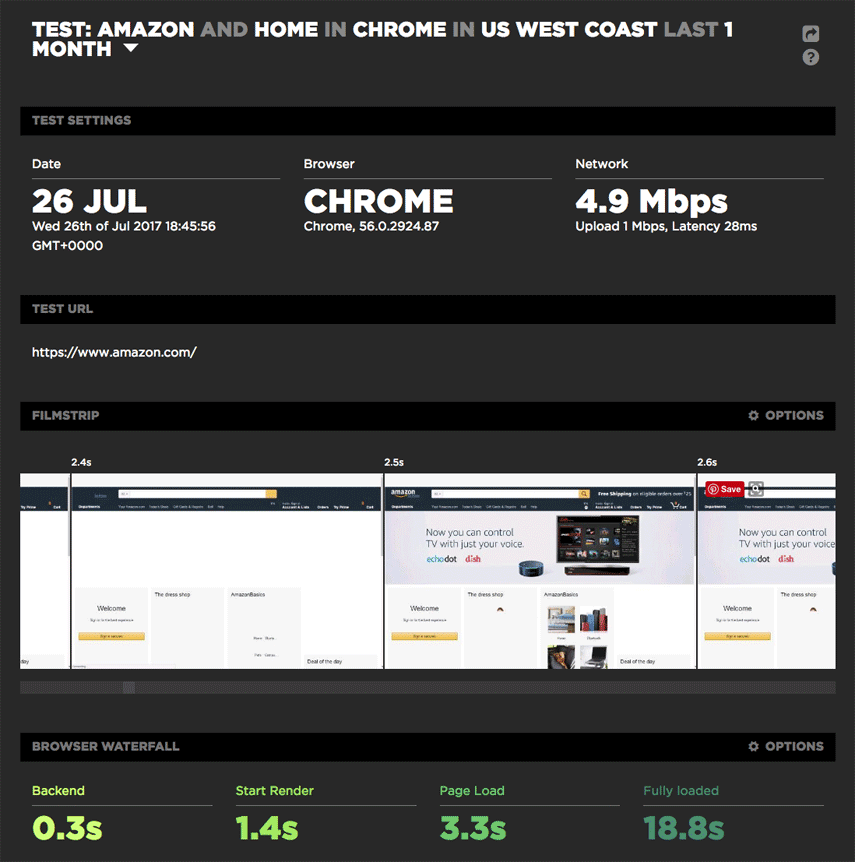

Today, pages are often considered rendered long before the download is finished. The user perceives that the page loaded fast, even though the full-download metric (the window.onload event) lags behind. For example, in tests, Amazon product pages render quickly. The “above the fold” portion, meaning the upper part of the page that is visible without scrolling, loads in 2 seconds, while the full download takes over 5 seconds. From the user's perspective, the page loads quickly, and they can start viewing content while the rest of the page completes in the background. Web developers are beginning to use similar techniques to improve perceived performance. We continuously monitor our clients' bandwidth use, which also gives us historical data that helps us see these trends and stay ahead of them.

Source: SpeedCurve


It’s All About Customer Satisfaction

BusinessCom Networks will continue to use compression and optimization features for HTTP sites. We will also continue to enhance our high-precision bandwidth management capabilities, using unique teleport solutions and remotely integrated tools that provide end-to-end optimization. Our enterprise-class services use sharing ratios designed to help pages render quickly by providing high burstable speeds. At the same time, they guarantee consistent throughput for critical applications and for real-time traffic such as voice and video. Premium and dedicated services provide more guaranteed throughput as the client requires. We base our bandwidth estimates mostly on feedback from clients and partners. Different markets also have different expectations. Consider a market in Venezuela made up of many small mining operations that use the service primarily for financial and production data. Its requirements will differ from those of an Internet café in a bustling African city, full of students researching term papers.
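The trade-off behind sharing ratios can be sketched numerically. In this illustrative model, a site can burst to the full plan rate when the shared pool is idle. When every site sharing the pool is busy at once, its rate falls to the burst rate divided by the contention ratio, but never below any committed (guaranteed) rate. The `SharedPlan` class and all the numbers are hypothetical; they are not a BusinessCom product definition. Transfer times are idealized, using decimal units (1 MB = 8 megabits) and ignoring latency, protocol overhead and compression.

```python
from dataclasses import dataclass


@dataclass
class SharedPlan:
    """Hypothetical shared-bandwidth plan used only for illustration."""

    burst_mbps: float            # peak rate a site can reach when the pool is idle
    contention_ratio: int        # 4 means a 4:1 sharing ratio
    committed_mbps: float = 0.0  # guaranteed floor, if the plan has one

    def worst_case_mbps(self) -> float:
        """Per-site rate when every site sharing the pool is busy at once."""
        fair_share = self.burst_mbps / self.contention_ratio
        return max(fair_share, self.committed_mbps)


def page_seconds(page_mb: float, rate_mbps: float) -> float:
    """Idealized transfer time: megabytes -> megabits, divided by the rate."""
    return page_mb * 8 / rate_mbps


if __name__ == "__main__":
    plan = SharedPlan(burst_mbps=8.0, contention_ratio=4, committed_mbps=1.0)
    page_mb = 4.0  # roughly the average page size cited in this article
    print(f"burst:      {page_seconds(page_mb, plan.burst_mbps):.1f} s")
    print(f"worst case: {page_seconds(page_mb, plan.worst_case_mbps()):.1f} s")
```

Consider a plan with an 8 Mbps burst rate, a 4:1 sharing ratio and a 1 Mbps commitment. A 4 MB page takes about 4 seconds at full burst and 16 seconds in the worst case. Page loads are short bursts, and users rarely all request pages at the same moment. Sharing ratios rely on this: most page loads should see speeds much closer to the burst rate.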

As a point of interest, an ISP such as BusinessCom could use MITM (man-in-the-middle) techniques to look inside HTTPS traffic again and optimize it. In essence, this means terminating and re-establishing the secure SSL/TLS session in order to optimize the traffic carried inside it. In practice, the client's devices must then trust a certificate issued by the ISP. Would clients be open to this kind of external optimization by independent ISPs, or would they prefer to remain hostage to the web developers? The industry is wrestling with this question, because HTTPS has negated external optimization solutions.

Aside from critical bandwidth management tools and feedback from clients, the obvious alternative is always the same: more bandwidth! The good news on that front is that ongoing launches of HTS (High Throughput Satellites) are steadily increasing capacity in space. That is bringing down the price of the bandwidth that the average 4 MB web page says you are going to need soon!